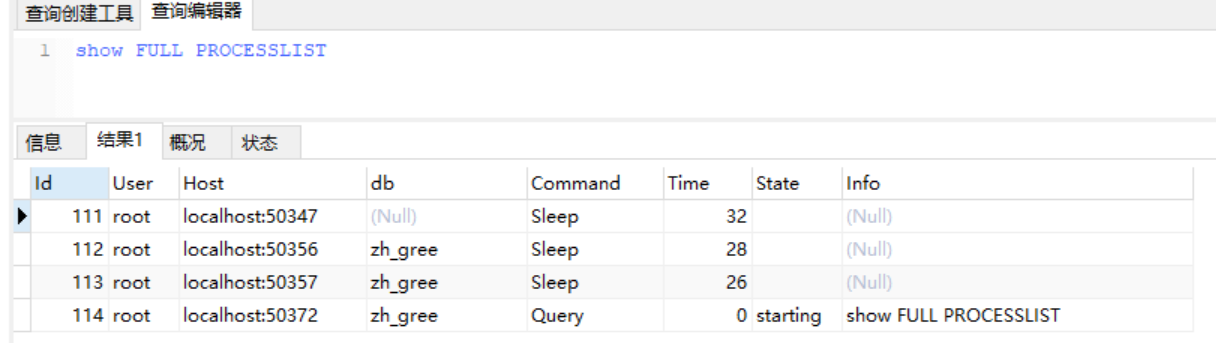

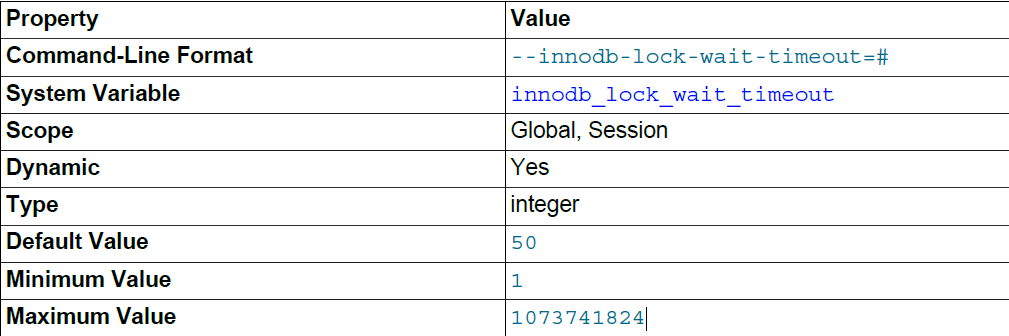

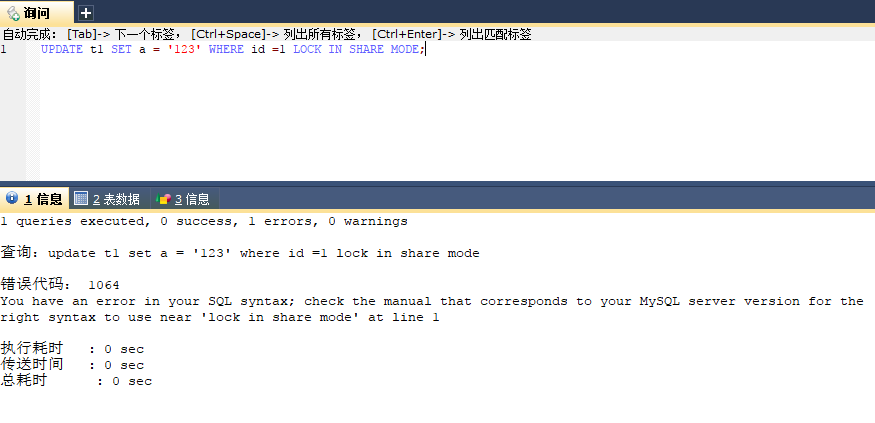

I'm not looking to increase the timeout value, since updating these fields used to take less than a second, so I'm not sure what has changed since then. So far, I think it's a MySQL problem rather than a Django one, and I have half a mind to start over with a new database. I've considered it being a size problem, but I only have 4 entries so far in my table for 'Clients', and none of them store any sort of large data. The model only has 7 fields, describing VPN servers I'm cataloguing. I can kill the process while it's running, but it's the only running query, and not even a big one at that.

The end gets cut off in the table, but I'm assuming the rest is there: Updating | `UPDATE openvpn_client SET name = 'rsa_test1',` and further on `local_ip = '2.2.2.2', vpn_ip = NULL, type =`

+ 3042268: SQLSTATE[HY000]: General error: 1205 Lock wait timeout exceeded; try restarting transaction. The SE accepted answer, the article it links to, and the existing core issues all say to use READ COMMITTED.

ERROR 1205: 1205: Lock wait timeout exceeded; try restarting transaction. SQL Statement: UPDATE Search. Why is it occurring all of a sudden? I don't know what to do to stop this error. I keep getting "Lock wait timeout exceeded; try restarting transaction", and it looks like it is never going to end.

Note: Setting a query timeout in Resource Governance will not affect idle transactions. The query timeout limit only applies to executing queries. If the session timeout value is set lower than the lock wait timeout (default 60 seconds), then idle connections will not cause lock wait timeouts to be breached.

When multiple batch processes run at the same time, I get the following error: (1205, 'Lock wait timeout exceeded; try restarting transaction'). It is not related to AWS Batch. If I run one batch process, no problem occurs. Each batch handles its own distinct set of rows.
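Since the error message itself says to try restarting the transaction, one way to read that advice for the concurrent batch case is a small retry wrapper. The following is only a sketch under stated assumptions: `LockWaitTimeout` and `run_with_retry` are hypothetical names, not part of any driver, and the callable passed in is assumed to begin and finish its own transaction each time it is called. With a real MySQL driver you would catch that driver's own error class and read the error code from it instead. Retrying only works around the symptom; it does not explain which transaction is holding the lock.

```python
import random
import time

LOCK_WAIT_TIMEOUT = 1205  # MySQL error code from the messages quoted above


class LockWaitTimeout(Exception):
    """Hypothetical stand-in for a driver error that carries a MySQL error code."""

    def __init__(self, code, message):
        super().__init__(code, message)
        self.code = code


def run_with_retry(transaction, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call `transaction()` and retry it when it fails with error 1205.

    `transaction` is assumed to run one complete transaction per call.
    Any other error, or a 1205 on the final attempt, is re-raised.
    """
    for attempt in range(1, attempts + 1):
        try:
            return transaction()
        except LockWaitTimeout as exc:
            if exc.code != LOCK_WAIT_TIMEOUT or attempt == attempts:
                raise
            # Exponential backoff plus jitter so that competing batch
            # processes do not all retry at the same moment.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, base_delay))
```

The `sleep` parameter exists only so the wait can be replaced in a test; in normal use the default `time.sleep` applies.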
Getting "Lock wait timeout exceeded; try restarting transaction" even though I'm not using a transaction. I am inserting data into one of my tables, and I keep getting a lock. When I run `insert into inventoryfiles (id, proid) values (30,6569)` I get the error above. But it doesn't solve the problem.

I've looked into it and can kill the process. I've disabled any signal handling (post/pre-save) I had set up, but I think just the UPDATE call after hitting save is triggering this issue. Just editing and hitting save via the admin interface triggers it. The problem seems to be the update failing and timing out. Also, SHOW ENGINE INNODB STATUS doesn't show any locked tables or rows.

The database is Aurora Serverless 2.08.3, and there were no configuration changes (innodb_lock_wait_timeout, etc.) from my side (not sure whether there were any from AWS itself).

Earlier today, it was working, and I've been rolling back changes to the last working version to try to narrow down what seems to be the issue. The timeout for waiting a lock is defined by the.

I'm still a little new to Django, and I'm getting (1205, 'Lock wait timeout exceeded; try restarting transaction') when I try to update my model via the admin interface. In the pessimistic transaction mode, transactions wait for each other's locks.
